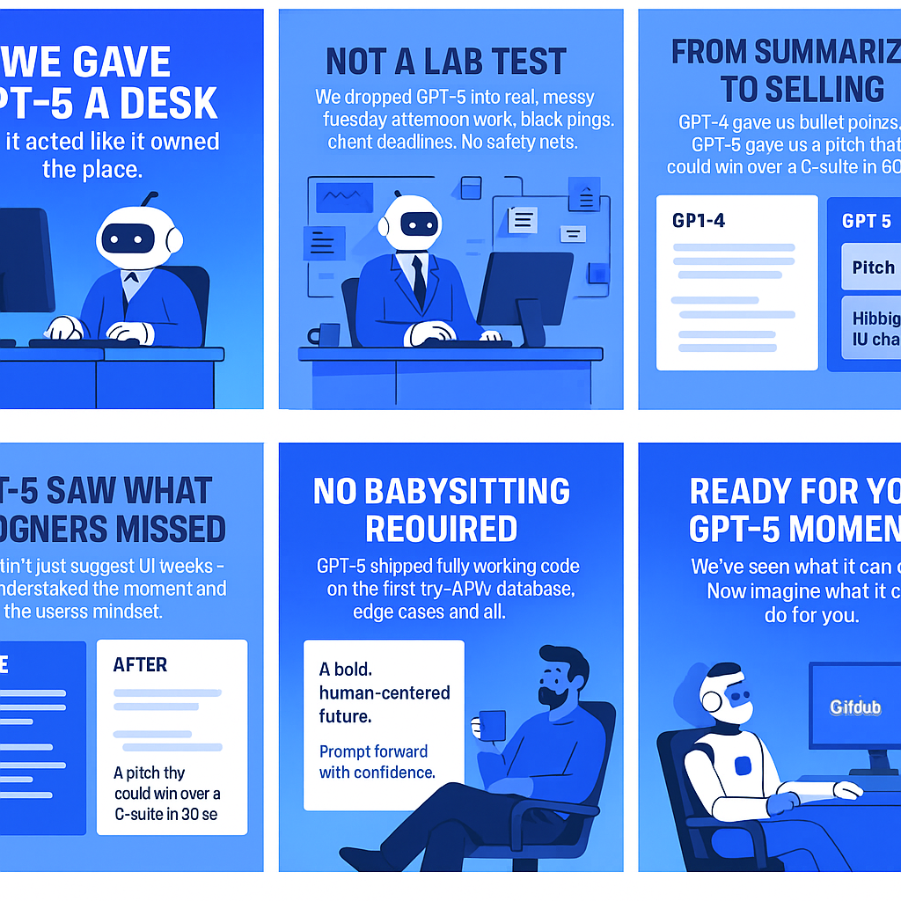

We gave GPT‑5 a day in the office. It might deserve a promotion.

We tested GPT-5 on real business tasks for a day. Here's what surprised us, what flopped, and what's genuinely useful.

We’ve all seen the GPT‑5 hype: smarter, faster, almost freakishly capable. Most reviews stop at abstract benchmarks or cherry-picked examples that don’t survive in the real world.

So we wanted to know something else: what happens when you drop GPT-5 into actual, messy, everyday workflows? Not a sterile lab test, but the kind of tasks your team runs on a Tuesday afternoon, on deadline, with Slack blowing up in the background. We gave it a desk and a few real tasks from our clients, and let it prove itself.

Let’s just say we weren’t ready for how good it was. We ran the new model through a set of real tasks we’d previously handled with older models, and the difference was obvious.

We’ve included the results side by side, so you can see for yourself.

GPT‑5 vs your designer’s UX eyes

We dropped GPT‑5 into our sales automation platform as if it had just joined the product team. Here's the exact prompt we gave it:

Here’s a screenshot of our SaaS app for automating sales outreach. What are 3 UX issues you notice? Assume this is an early-stage product and we’re preparing for demo day. Give specific suggestions for improvement, and explain why each change would matter to the end user (startup founder or SDR).

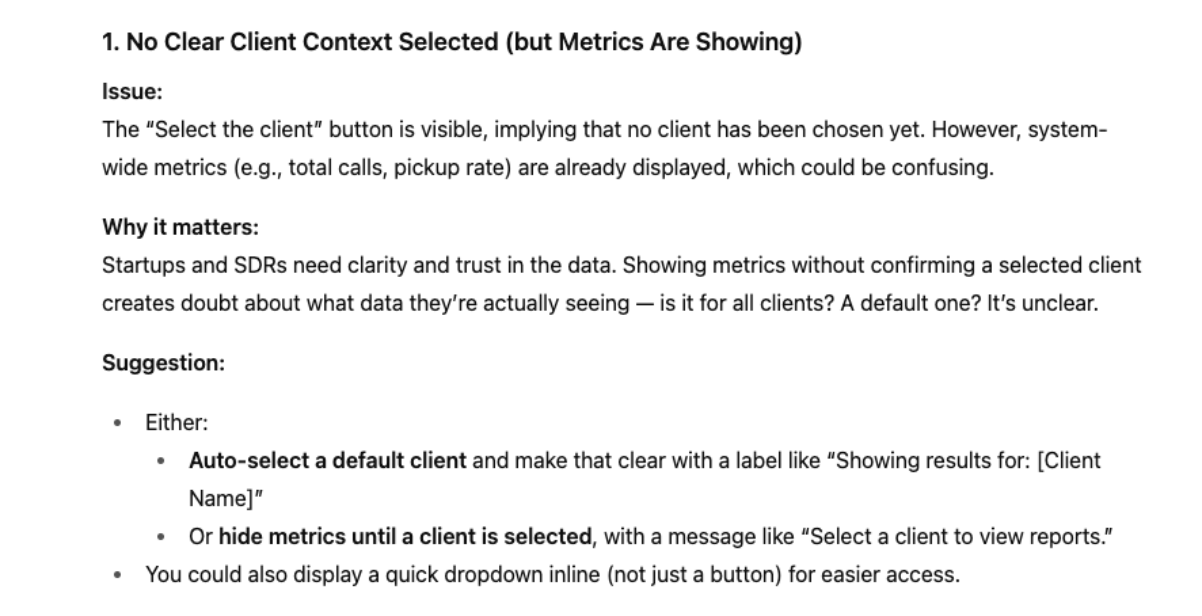

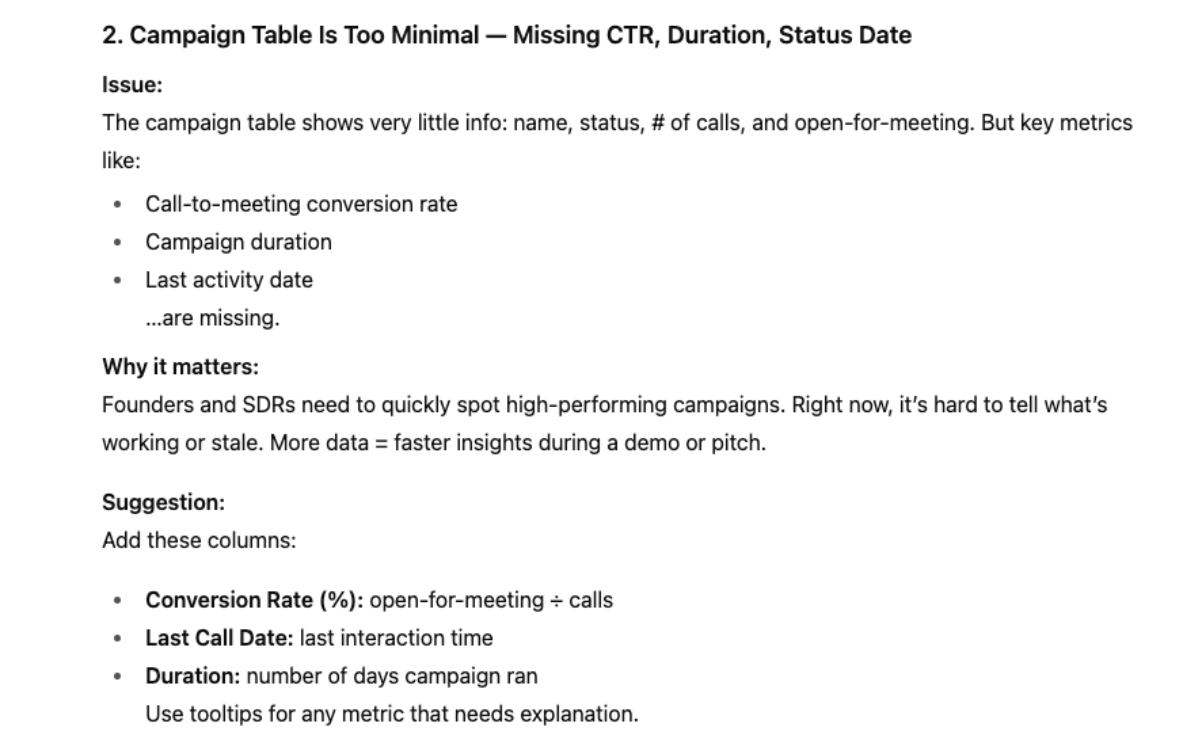

GPT-4: the old model’s answer

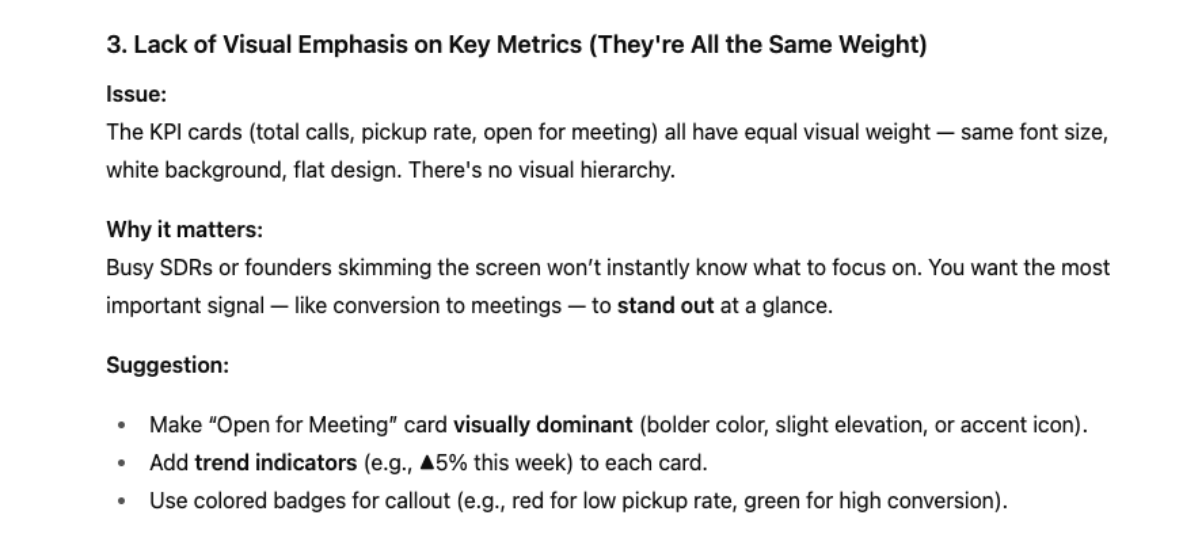

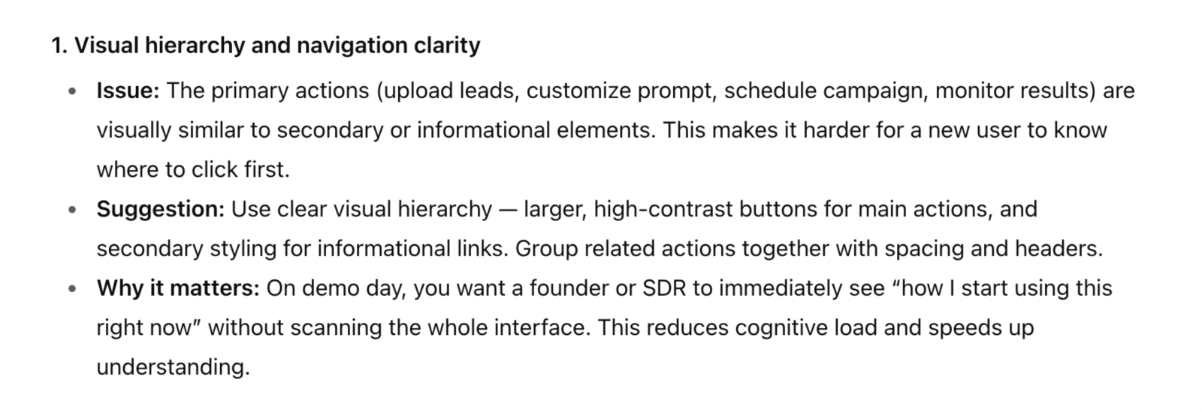

GPT-5: the new model’s answer

The older model did what it usually does: it picked up on the obvious UI tweaks. Add missing columns. Adjust the table. All useful, but it read like a checklist you could hand to any junior designer, with no real sense of why those changes mattered for this specific product at this specific moment.

The new model took it further. It grasped the app’s purpose, the mindset of SDRs and founders, and the fact that this was a demo scenario. Its suggestions weren’t just “make it look better” but “make it click instantly” for someone seeing it under pitch pressure. That sold us!

The moment GPT stopped summarizing and started persuading

We all love a bold, beautifully written brand manifesto, but let’s be real: no exec is reading a two-pager while hopping between calls. So we gave GPT‑5 something we genuinely care about: the draft manifesto for our new AI product, Prompto.

The question: if a new marketing lead had 60 seconds to get aligned, what would they need to know, and what would they do next? Here’s the prompt we used:

Summarize this brand manifesto in 3 short sentences that capture the core purpose, tone, and positioning of the brand — like you’re briefing a new marketing director who just joined the team and has 60 seconds to get aligned. Then suggest one practical next step based on the brand’s direction (e.g., campaign, messaging audit, new page idea). Keep the tone confident and no-nonsense.

GPT-4: the old model’s answer

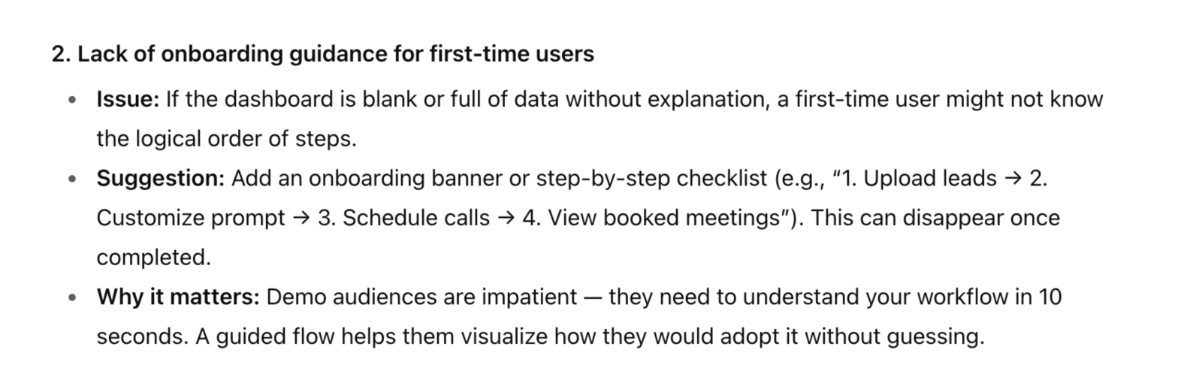

GPT-5: the new model’s answer

In short: GPT-4 summarized it. GPT-5 sold it.

The old model did what we asked: it trimmed the fat, kept the “fast, clear, human” promise, and called it a day. But it stayed on the surface.

The new model felt different. You can see the extra layer of reasoning in how it framed the manifesto: it knew how to hook attention, hit the core point, and package it. Even the “next step” it suggested showed that spark. Not human-level creativity, not yet, but closer than we’ve seen before. And that’s the part that’s both exciting and just a little bit scary.

Our devs used GPT every day. GPT-5 still surprised them.

Our dev team has been leaning on ChatGPT for a while. It could spin up decent boilerplate, knock out small functions, and help untangle the occasional bug. But we all knew the pattern: once a task got too complex, it would start guessing. Context would slip, variable names would change mid-stream, and you’d end up stitching things together yourself. Three or four iterations later, sure, you’d have working code, but it was never “one and done.”

GPT-5 broke that pattern in week one. The same tickets that used to come back half-built now arrive fully wired, with API calls, database setup, and edge cases handled, without us needing to babysit it through the process.

And when it comes to debugging, the difference is night and day. With GPT-4, you’d get a decent hunch about where the problem was. With GPT-5, it’s like having someone follow the bug across files, explain exactly why it’s breaking, and then propose the fix before you’ve even asked. The workflow feels less like “AI-assisted coding” and more like pairing with a senior dev who already knows the codebase.

But not every area got the upgrade

If there’s one thing our marketing team kept grumbling about with the old model, it was social visuals. GPT-4 could analyze, summarize, and write. But when it came to pulling something creative out of the text and shaping it into a visual concept, it fell flat. It either repeated chunks of the copy or came back with vague “put this in a nice graphic” ideas that no designer could actually run with.

So for our last test, we gave GPT-5 the blog post you’re reading right now and told it to turn it into a LinkedIn carousel.

The prompt we used:

"Here’s the full text of our blog. Create a LinkedIn carousel concept based on it. 5 to 7 slides max. Each slide should have a short, punchy headline (max 8 words) and 1–2 lines of supporting copy. Keep the tone bold and confident, with the same style as the blog. Suggest a visual concept for each slide (icon, photo, illustration, chart). Ensure there’s a logical flow from the first slide to the last, ending with a clear CTA for engagement."

This is what the new model gave us

It did a slightly better job of pulling out the right content for the slides (sharper headlines, more relevant points), but when it came to actually building the visuals, it was the same story as before. It still chops images mid-text, sometimes ignores the brief, and the first version is almost always the best you’ll get. Try to tweak it and things usually go downhill fast as it gets confused.

So no, we weren’t blown away here. The creative thinking is definitely more useful overall (that’s why we tested this use case in the first place), but in terms of execution, this is one area where the upgrade didn’t land.

To sum up

At this point, everyone’s using ChatGPT. It’s as normal as opening Google. And we’ve all heard the buzz around GPT-5: better reasoning, sharper context handling, even more natural voice capabilities. We haven’t tested the voice part yet, but one thing we can confirm from our own work is this: the creative thinking has leveled up, big time.

We’ve moved past the days when it would just summarize or rephrase. Now it connects the dots, shapes outputs with the audience in mind, and comes back with ideas. It still misses details and needs a human to polish the edges, but the jump in context-awareness and “thinking before writing” is hard to ignore.

And honestly, we can’t wait to see what’s next: where this goes, how fast it evolves, and what kind of workflows we’ll be testing a year from now!
